Mirror of https://github.com/openai/whisper.git (synced 2025-11-29 08:28:53 +00:00)

General improvements

parent 90db0de189

commit d5636d387d

10

README.md

@@ -5,19 +5,19 @@

[[Model card]](https://github.com/openai/whisper/blob/main/model-card.md)

[[Colab example]](https://colab.research.google.com/github/openai/whisper/blob/master/notebooks/LibriSpeech.ipynb)

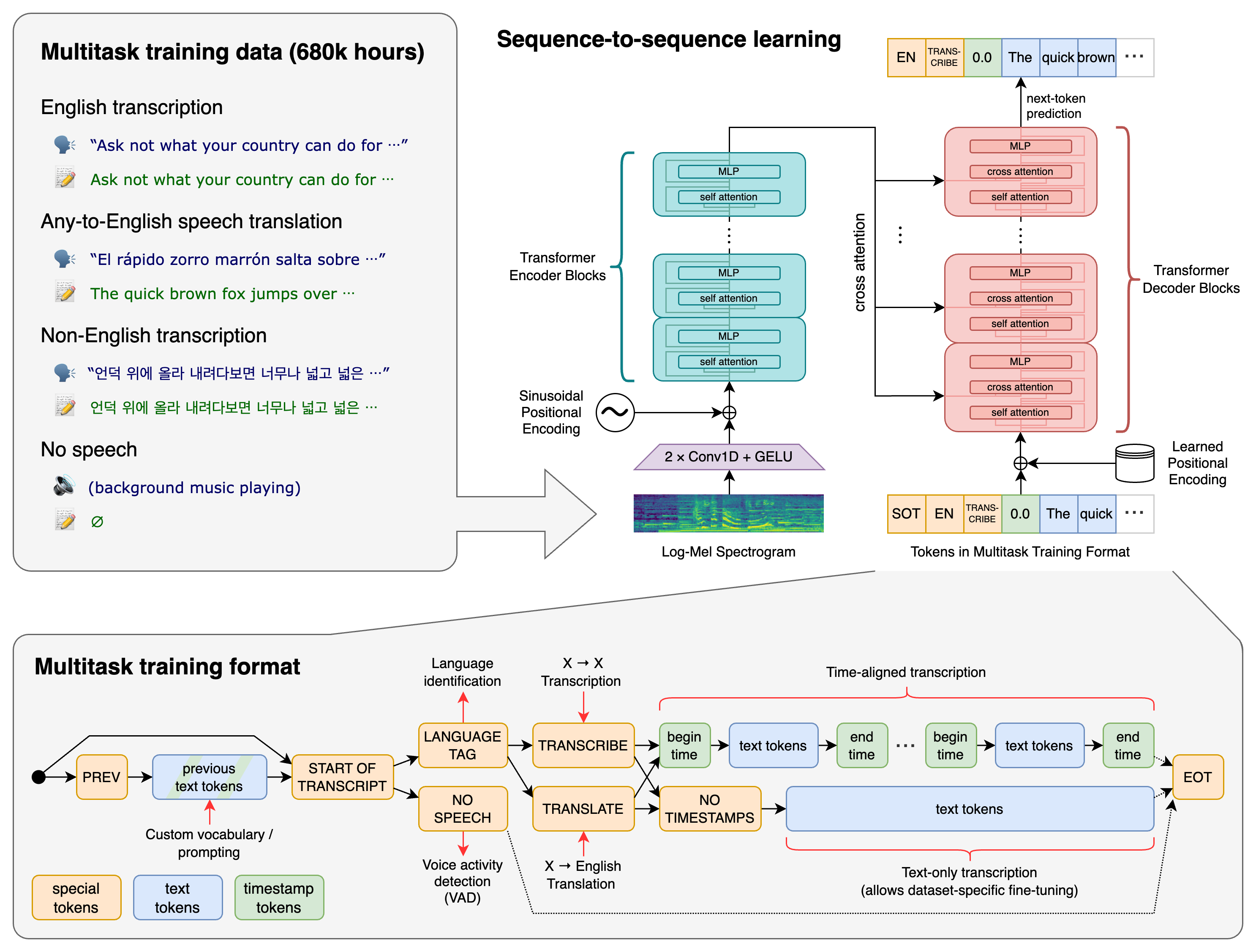

Whisper is a general-purpose speech recognition model. It is trained on a large dataset of diverse audio and is also a multitasking model that can perform multilingual speech recognition, speech translation, and language identification.

Whisper is a general-purpose speech recognition model. It is trained on a large dataset of diverse audio. It is also a multitasking model that can perform multilingual speech recognition, speech translation, and language identification.

## Approach

A Transformer sequence-to-sequence model is trained on various speech processing tasks, including multilingual speech recognition, speech translation, spoken language identification, and voice activity detection. These tasks are jointly represented as a sequence of tokens to be predicted by the decoder, allowing a single model to replace many stages of a traditional speech-processing pipeline. The multitask training format uses a set of special tokens that serve as task specifiers or classification targets.

A Transformer sequence-to-sequence model is trained on various speech processing tasks, including multilingual speech recognition, speech translation, spoken language identification, and voice activity detection. These tasks are represented together as a sequence of tokens to be predicted by the decoder. This allows a single model to replace many stages of a traditional speech-processing pipeline. The multitask training format uses a set of special tokens that serve as task specifiers or classification targets.
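
As a rough illustration of the "special tokens as task specifiers" idea described above, the sketch below assembles the prefix a decoder could be conditioned on. The token strings follow the naming used by Whisper's tokenizer, but `build_decoder_prompt` is a hypothetical helper written for this example; it is not part of the `whisper` package and works on plain strings rather than token ids.

```python
from typing import List

# Special-token strings named as in Whisper's tokenizer. The helper below is
# hypothetical and only illustrates how the task is encoded in the prefix.
SOT = "<|startoftranscript|>"
NO_TIMESTAMPS = "<|notimestamps|>"
TASKS = ("transcribe", "translate")


def build_decoder_prompt(language: str, task: str, timestamps: bool = False) -> List[str]:
    """Return the special tokens that specify language and task for the decoder."""
    if task not in TASKS:
        raise ValueError(f"unknown task: {task!r}")
    # Order: start-of-transcript, language tag, task tag.
    prompt = [SOT, f"<|{language}|>", f"<|{task}|>"]
    if not timestamps:
        # Ask for plain text without timestamp tokens.
        prompt.append(NO_TIMESTAMPS)
    return prompt


print(build_decoder_prompt("en", "transcribe"))
print(build_decoder_prompt("fr", "translate", timestamps=True))
```

Switching from transcription to translation changes only one token in the prefix, which is how a single model can cover several tasks.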

## Setup

We used Python 3.9.9 and [PyTorch](https://pytorch.org/) 1.10.1 to train and test our models, but the codebase is expected to be compatible with Python 3.8-3.11 and recent PyTorch versions. The codebase also depends on a few Python packages, most notably [OpenAI's tiktoken](https://github.com/openai/tiktoken) for their fast tokenizer implementation. You can download and install (or update to) the latest release of Whisper with the following command:

We used Python 3.9.9 and [PyTorch](https://pytorch.org/) 1.10.1 to train and test our models. The codebase should also be compatible with Python 3.8-3.11 and recent PyTorch versions. The codebase also depends on a few Python packages, most notably [OpenAI's tiktoken](https://github.com/openai/tiktoken) for their fast tokenizer implementation. You can download and install (or update to) the latest release of Whisper with the following command:

pip install -U openai-whisper

@@ -59,7 +59,7 @@ pip install setuptools-rust

There are six model sizes, four with English-only versions, offering speed and accuracy tradeoffs.

Below are the names of the available models and their approximate memory requirements and inference speed relative to the large model.

The relative speeds below are measured by transcribing English speech on a A100, and the real-world speed may vary significantly depending on many factors including the language, the speaking speed, and the available hardware.

The relative speeds below are measured by transcribing English speech on a A100. The real-world speed may vary significantly depending on many factors including the language, the speaking speed, and the available hardware.

| Size | Parameters | English-only model | Multilingual model | Required VRAM | Relative speed |
|:------:|:----------:|:------------------:|:------------------:|:-------------:|:--------------:|

@@ -142,7 +142,7 @@ print(result.text)

## More examples

Please use the [🙌 Show and tell](https://github.com/openai/whisper/discussions/categories/show-and-tell) category in Discussions for sharing more example usages of Whisper and third-party extensions such as web demos, integrations with other tools, ports for different platforms, etc.

Use the [🙌 Show and tell](https://github.com/openai/whisper/discussions/categories/show-and-tell) category in Discussions for sharing more example usages of Whisper and third-party extensions such as web demos, integrations with other tools, ports for different platforms, etc.

## License
